In this level 0 post, we will investigate Newton’s method for finding the roots of a function. Then, we will see how this process can induce chaos and find some fractals hidden inside.

Introduction

Many people won’t remember their entire high school algebra class, but the pieces that stick out are things like finding equations of lines and the quadratic formula. While I do know some people who learned that there is no quintic formula, I have never heard of anyone being taught the cubic or quartic formulas. And that makes sense. Those formulas are large, unwieldy beasts. For this reason, whenever students encounter these higher-degree polynomials, there is always some “obvious” factorization to do beforehand, after which the student is either done or uses the quadratic equation.

The emphasis on finding the roots of a polynomial (or any function) is that it is a universally-applicable method of solving an algebraic equation. For example, the quadratic formula doesn’t just tell you where a parabola equals 0. It gives the location of the intersection between two parabolas. The line is just a parabola where the coefficient of

is 0. You could also find the points intersection between your parabola and some other line or parabola, but the rules of algebra tell you that you can always push everything to one side of the equation so that at the end, you are just finding the zeroes of a parabola.

We can’t always provide an exact answer to the question of where two functions intersect, even in what seem like simple situations. But this problem is important in science, for example to our understanding of quantum mechanics (in the link: the available energy levels of the system are determined by the intersection of the curves in the graph). In these instances, we must use approximation methods. There are plenty of these root finding algorithms, but in this post, we will look at the method first described by Isaac Newton, though his description is much more convoluted than how we talk about it now.

Newton’s Method

Newton’s method is a way of approximating the zeroes, or roots, of a function. The basic procedure is quite simple. You start with a guess, almost anything will do, and call that . Now we want to use the properties of the function to get closer to a zero of the function. So what we do is draw a tangent line to the function at

, and then find where that tangent line crosses the

-axis. This new point is what we will call

. From here, we repeat everything we did before, now using

as our guess. This process is visualized in the animation below.

This process can be mathematized in the following way. Define to be the slope of the tangent line at

. Then, by recalling that the slope is the change in the height divided by the size of the horizontal step, we can reason how to find the next guess. Since we want to find the zero of the function, we want the change in height to be

. Then, we use the slope equation to find the necessary change in

.

If we call the current guess and the next guess

, then we can obtain

by adding the above change in

to

,

To find a root, we just use this equation over and over again. Once we have iterated Newton’s method to our heart’s content, we should have a decent approximation of a root of our function. Plus, we will know how well we did in our approximation because we can just evaluate the function at our final guess and see how close to 0 we get.

There are some circumstances in which this method does not work as intended. For example, the tangent line could be perfectly horizontal, meaning that there is no intersection with the -axis. There is also the possibility that there are no roots. For example, the function

has no roots on the real number line. There are even cases where the algorithm just jumps back and forth between a couple values of

, never approaching a root, and a very specific case where the guesses get further and further from the root (for an example, try the cube root function). There are ways of remedying these situations, mostly by nudging the

-value away from problem points, or in the case where there are no real solutions, nudging the

-value off of the number line. We won’t say much more about when Newton’s method fails. If you are interested, you can check the Wikipedia page (but be warned, there are spoilers).

Going back to high school algebra, one may remember that a polynomial has the same number of roots as its degree (its highest exponent). Not all of these roots may be real numbers, some may have imaginary parts. Then, there is the question of whether Newton’s method can find these complex roots. How would we draw an imaginary tangent line? What does that even mean for a complex number?

The short answer is that we can use Newton’s method to find complex roots, but we lose the ability to think about the process graphically. We have to replace our simpler notion of a tangent line with the more abstract notion of a derivative from calculus, but everything works out in essentially the same way. We will need to go over a couple basics about complex numbers to continue on to the meat of this post.

Complex numbers

Complex numbers were actually introduced to deal with some conceptual difficulties people were having when finding roots of polynomials. In the quadratic equation, there is a square root, and sometimes the number inside the square root would be negative. This didn’t make sense at the time because nothing gives a negative number when you square it, so there is no such thing as the square root of a negative number! But it still made sense to use these questionable square roots in calculations, so what gives? Maybe some day I will dedicate a whole post to the beautiful math underlying the answer to this equation, but for now, we will stick with the simple answer: imaginary numbers are valid numbers.

The imaginary unit, , was put into use to tidy up the study of polynomials and their roots. This was a real breakthrough that has brought with it some of my favorite mathematics. What we will need here is the notion of the complex plane.

Complex numbers are numbers that have a real part and an imaginary part, so they can always be written as , where

and

are the real and imaginary parts, respectively. Therefore, they are in a sense two-dimensional numbers, and we can represent them on the complex plane.

The complex plane is just like the plane on which you would graph a function, except each point refers not to a pair of numbers, like , but to a single complex number,

. The horizontal axis represents the real part of the number, and the vertical axis represents the imaginary part. When we think of functions of real numbers, we can plot those in 2 dimensions because both the input and the output of the function are one-dimensional. With functions that take in complex numbers and output complex numbers, we would need 4 dimensions to fully represent what is going on. We will need to do something more creative to deal with this. But for now, we may move on.

Newton’s Fractals

Using the same algorithm described above, but in the complex numbers, we find that beautiful structures emerge from the behavior of points under this algorithm. For example, see the video below, where a grid of points in the complex plane are sent through Newton’s method on the polynomial until they reach a root. Once the points reach a root, they are each colored according to which root they approached and sent back to their original position. This forms a fractal.

The fractal in the above video is a very special case of a Newton fractal because the underlying function has 3-fold rotational symmetry, which is then also expressed in the fractal. By grabbing a root and moving it slightly, we can break this symmetry and see what effect this has on the resulting Newton fractal. Below is a video doing just that, but starting with the function , which is similar to the previous function, but with 5-fold rotational symmetry.

The structures that emerge from this symmetry breaking to me resemble phenomena in fluid mechanics, like Kelvin-Helmholtz instabilities or this fluid flow past a cylinder. However, these structures are not what you would “typically” find in a Newton fractal. In the next video, we construct a function with 5 roots, which are placed randomly on the screen, and then given physics so that they act like bouncy balls. It’s a silly thing to do, but the result is that we get to see more generic Newton fractals. Note that sometimes black regions form. These are not part of the fractal, those points just didn’t converge to a root in the time I gave them.

In the above video, there is a particular shape that keeps popping up, which I call a prickly pear, since it reminds me of the cactus when it starts to bear fruit. These are much more typical shapes in one of these fractals (at least when the underlying function is a polynomial).

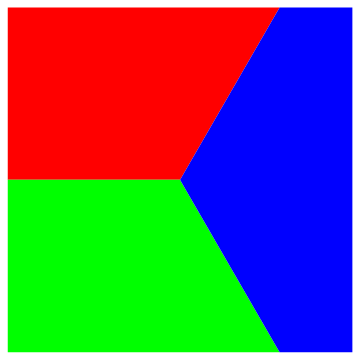

This is all great, but one might wonder why a fractal forms at all. Let’s start with the opposite question: when does Newton’s method not produce a fractal? I think it’s fairly clear that if the function is linear, then there is no fractal. If there is only one root, then the picture would just all be one color, which is a far cry from a fractal. The case of a quadratic polynomial also does not produce a fractal. The resulting image is just the complex plane cut in half, where all the numbers approach whichever of the two roots that they are closer to. We have seen that a cubic polynomial produces a fractal, so it seems that this is the threshold.

Let’s take another look at that highly symmetric cubic, . The roots of this function are

. As a first approximation to the fractal, let’s divide the complex plane into 3 sections in a similar way to what we observed in the video.

I want to pay particular attention to the boundary between the regions. On one side of the boundary, we are claiming that a number approaches one root, while on the other side a number approaches a different root. What happens right on the boundary, though? These boundary numbers must not approach any root at all, either going off to infinity or getting stuck in a loop. To investigate further, we can write down the equation for Newton’s method using calculus.

Notice that if we start with a real number, we always get a real number back. This is particularly important on the negative real numbers, where we drew a boundary between the two complex roots in our first approximation (the boundary between red and green). Those negative numbers would never become complex, so they only have 2 options: either approach the root , or don’t approach a root at all. Both options are taken, and we can see that in the following way. Some negative numbers become positive under an iteration of Newton’s method, for example

, and positive numbers will then approach the root

. On the other hand, some negative numbers become 0 after some number of iterations, and we can see that 0 is a bad point in the equation above, since we can’t divide by zero. As an example, the number

.

The first example of is not on the true boundary, whereas the second example,

is on the true boundary. Since the first example (and the other negative real numbers between 0 and

) are not on the boundary, then a small neighborhood around them must also converge to the root

. This region isn’t just a line after all. On the other hand, the points that are on the boundary are now surrounded by regions of points that approach every root, in other words they are surrounded by every color. This is in fact true of all boundary points, and this explains everything.

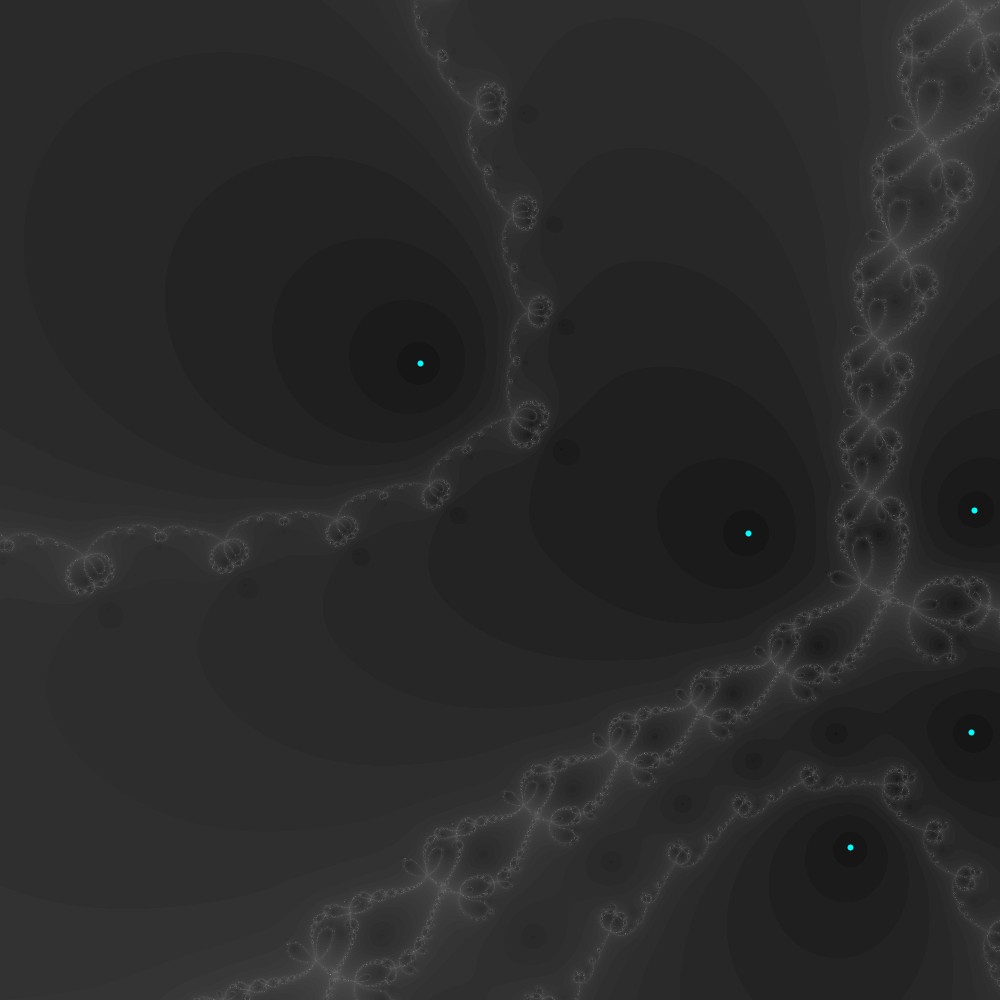

The quadratic function does not produce a fractal because it is easy to construct a boundary where every point on that boundary is surrounded by both colors, just a straight line will do. But once you have 3 colors, you can no longer have such a simple boundary. It is easy to make all three colors adjacent to a point, but to do so at every point requires structures at every scale, which is precisely what a fractal gives you. We can visualize this boundary by coloring in the fractal according to how many steps it takes to reach a root. Darker points reached the root quickly, so the lightest points show the boundary. The roots are also pictured in cyan.

Near the boundary, since there are structures at every scale, any little nudge to the starting point can change which root is reached. This sensitivity to minute changes of the initial conditions is one part of how mathematicians typically define chaos. Chaos theory is a beautiful branch of mathematics, and I’m sure I will devote many posts to chaotic systems in physics. I bring this up because of a deep connection between chaotic behavior and fractals. The consequences of this extreme sensitivity to initial conditions can seemingly only be encoded in something that has structure at all scales.

This is not the end of the story of Newton fractals. We have only considered fractals built from polynomials, but any function with zeroes can make a Newton fractal. I think I’ll post a follow up on these other Newton fractals next.

P.S. Not to bury the lead, but I have built a tool that allows you to make your own Newton fractal in the browser, which you can access here (disclaimer and source code). It allows you to place the roots, choose colors, zoom in an out, and visualize the boundary.

Very intriguing and fascinating post. The video “Building a Newton Fractal” got me thinking, what if we stacked the frames at each iteration and view it in 3d instead of one after other?

LikeLike

Very intriguing and fascinating post. The video “Building a Newton Fractal” got me thinking, what if we stacked the frames at each iteration and view it in 3d instead of one after other?

LikeLike